The Spec-Vault: Harness Engineering for AI-Driven Product Development


25 features in 5 days, 1 engineer, 0 merge conflicts. Same model. Different harness.

The Paradox

The fastest AI project we have ever run produced more structured documentation than any classical project before it. Feature specs, acceptance criteria, audit reports, architecture references, decision logs. At the same time, the engineer wrote less prose than in a single sprint planning session.

25 features. 5 days. One engineer. Not because of better prompts. Because of a system that tells the AI what it needs to know before the conversation begins.

The Wrong Bottleneck

Open session
→ Provide context
→ AI codes
→ Session ends
→ Knowledge gone
→ Next session starts from zero

The industry optimizes the conversation with the AI. Better prompts. Larger context windows. Finer model selection. The silent assumption: the bottleneck is the conversation.

That assumption held for 18 months. During that phase, the AI itself was the constraint. Whoever could prompt better got better results. Prompt engineering was the right focus for 2024.

What changed: the models became good enough. Not perfect. But good enough that the individual session is no longer the bottleneck. The bottleneck shifted. Not to the right, toward output. To the left, toward input.

Anyone who develops with AI knows the pattern: the first session produces impressive results. The second session does not know the decisions of the first. The third contradicts the second. After a week you have code, but no product. Features, but no direction. Speed, but no memory.

The fundamental problem: AI sessions are stateless. Every new session starts from zero. Context, decisions, and product strategy live only in the engineer's head. As long as the engineer is the only context store, nothing scales.

The Solution in One Sentence

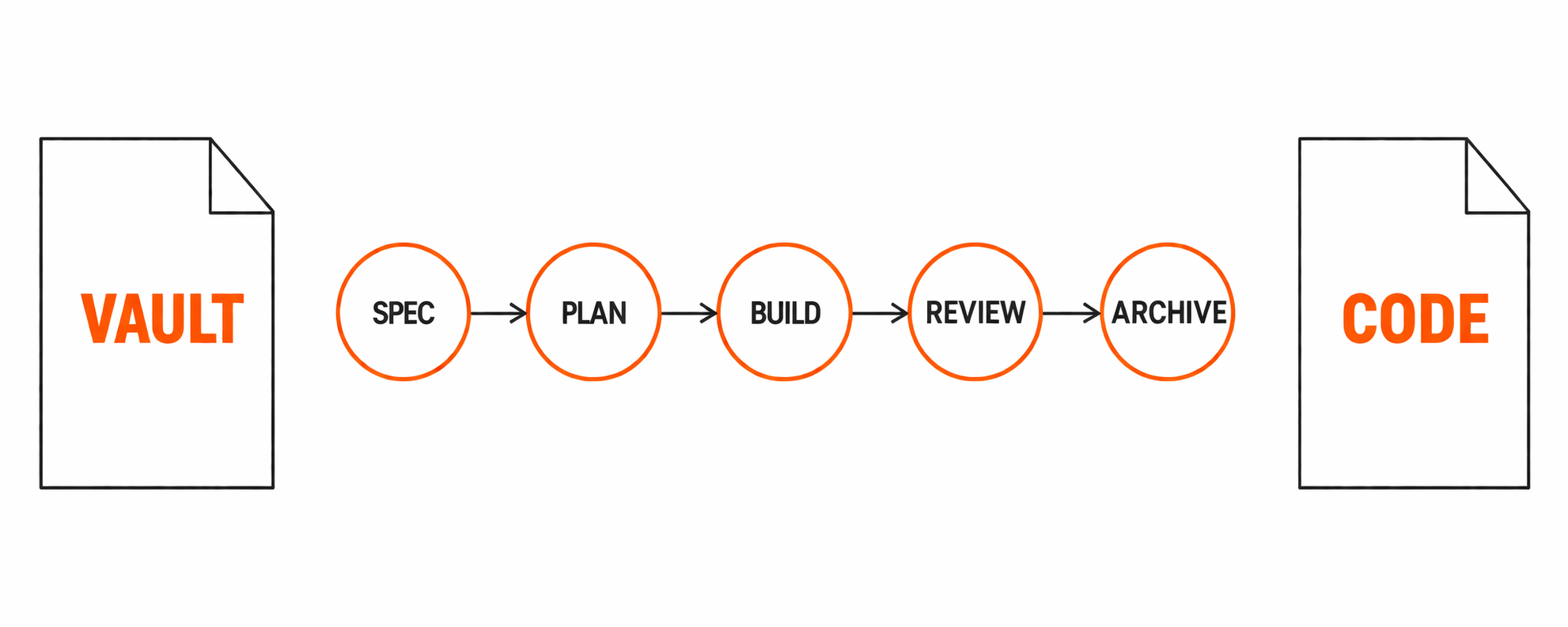

A vault is a git-versioned repository that contains only planning, strategy, and steering. No code. No deployment. Only the question: what should be built and why?

The vault steers a separate code repository through deterministic pipelines. Every AI session reads the vault, executes defined steps, and writes results back. The AI does not improvise. It executes.

The code repository contains the implementation. The vault contains the direction.

| Vault | Code Repository |
|---|---|
| Product strategy, roadmap | Technical architecture |
| Feature specs (what and why) | Implementation (how) |
| Acceptance criteria | Tests and validation |
| Decision log | Code patterns and conventions |
| Audit results | Build and deploy pipeline |
| AI context and rules | Application code |

Both are git-versioned. Both have a complete history. No information lives only in one head or one chat transcript. Versioning gives every decision a history. Branching enables parallel work. Diffing makes changes visible. The audit trail emerges automatically, not through discipline.

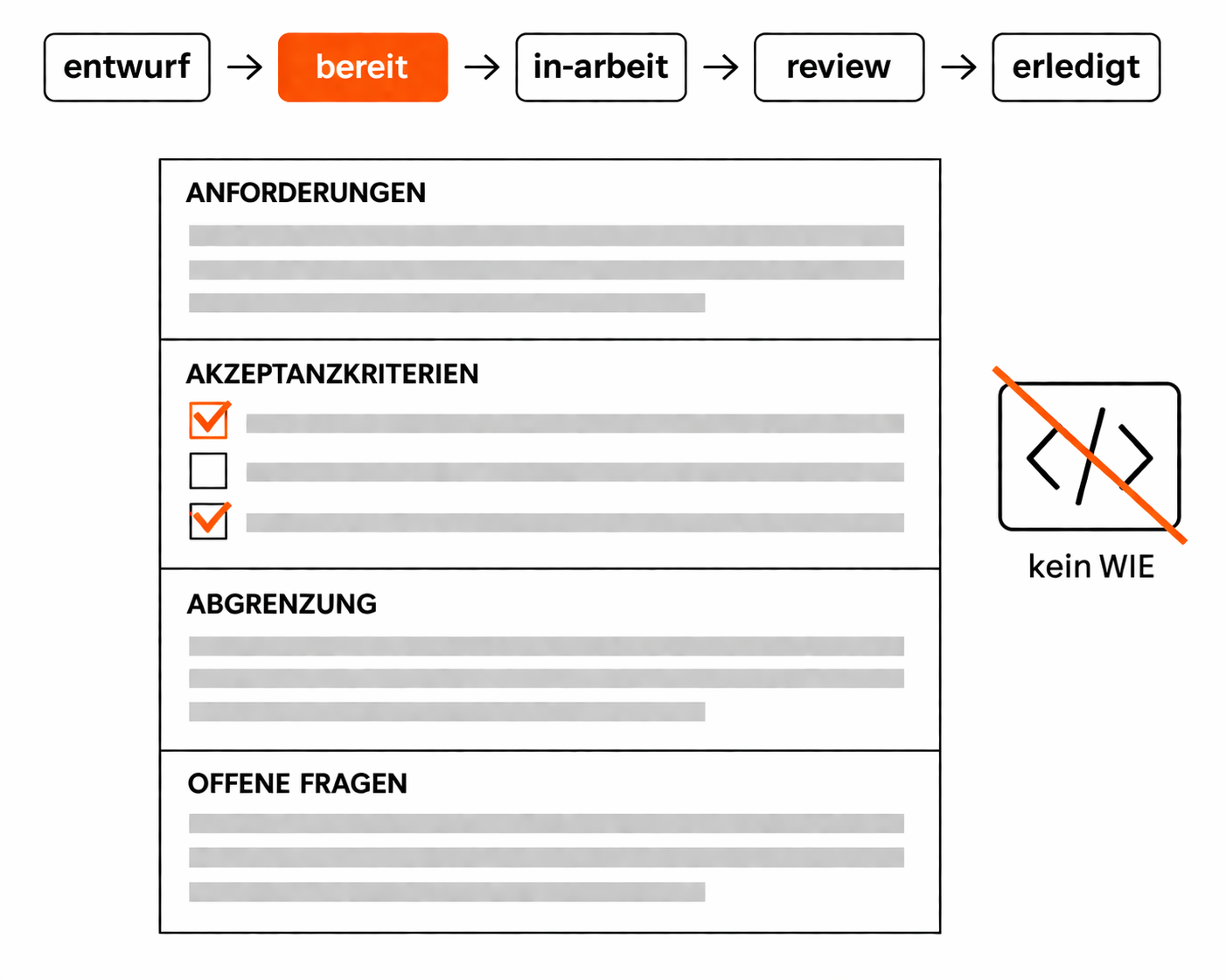

The Spec: Requirement Without Implementation

The core element of the vault is the feature spec. A spec describes what should be built and why. Never the how.

No code examples. No component names. No API paths. No architecture hints. The AI derives the technical implementation from the existing codebase itself. It knows the patterns, the services, the conventions better than the spec author. Technical instructions in the spec would constrain it instead of guiding it.

A spec contains four things:

- Requirements. What the user should be able to do, what should change.

- Acceptance criteria. Measurable, testable conditions for "done".

- Scope boundary. What is explicitly not included.

- Open questions. What must be resolved before implementation.
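To make the four parts concrete, here is a minimal Python sketch of a spec as data, with a lint check that flags a missing part or "how" leaking into the spec. The `FeatureSpec` class, the `lint_spec` and `ready_blockers` functions, and the regex heuristics (API paths, backticks, names ending in Component, Service, or Controller) are hypothetical illustrations, not the vault's actual tooling.

```python
import re
from dataclasses import dataclass, field

@dataclass
class FeatureSpec:
    """Hypothetical in-memory model of a spec's four parts."""
    spec_id: str
    requirements: list[str]
    acceptance_criteria: list[str]
    out_of_scope: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)

# Crude heuristics for "how" leaking into a what-and-why spec (illustrative only).
HOW_PATTERNS = [
    re.compile(r"/api/\S*"),                                          # API paths
    re.compile(r"`"),                                                 # inline code or fences
    re.compile(r"\b[A-Z][a-zA-Z]*(Component|Service|Controller)\b"),  # component names
]

def lint_spec(spec: FeatureSpec) -> list[str]:
    """Return a list of problems; an empty list means the spec passes this lint."""
    problems = []
    if not spec.requirements:
        problems.append("no requirements")
    if not spec.acceptance_criteria:
        problems.append("no acceptance criteria")
    if not spec.out_of_scope:
        problems.append("no scope boundary")
    for text in spec.requirements + spec.acceptance_criteria:
        if any(pattern.search(text) for pattern in HOW_PATTERNS):
            problems.append(f"implementation detail in: {text!r}")
    return problems

def ready_blockers(spec: FeatureSpec) -> list[str]:
    """Open questions must be resolved before the spec may move to ready."""
    return lint_spec(spec) + [f"open question: {q}" for q in spec.open_questions]
```

The lint deliberately checks only structure and leakage; whether a criterion is truly measurable still needs a human or AI reviewer.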

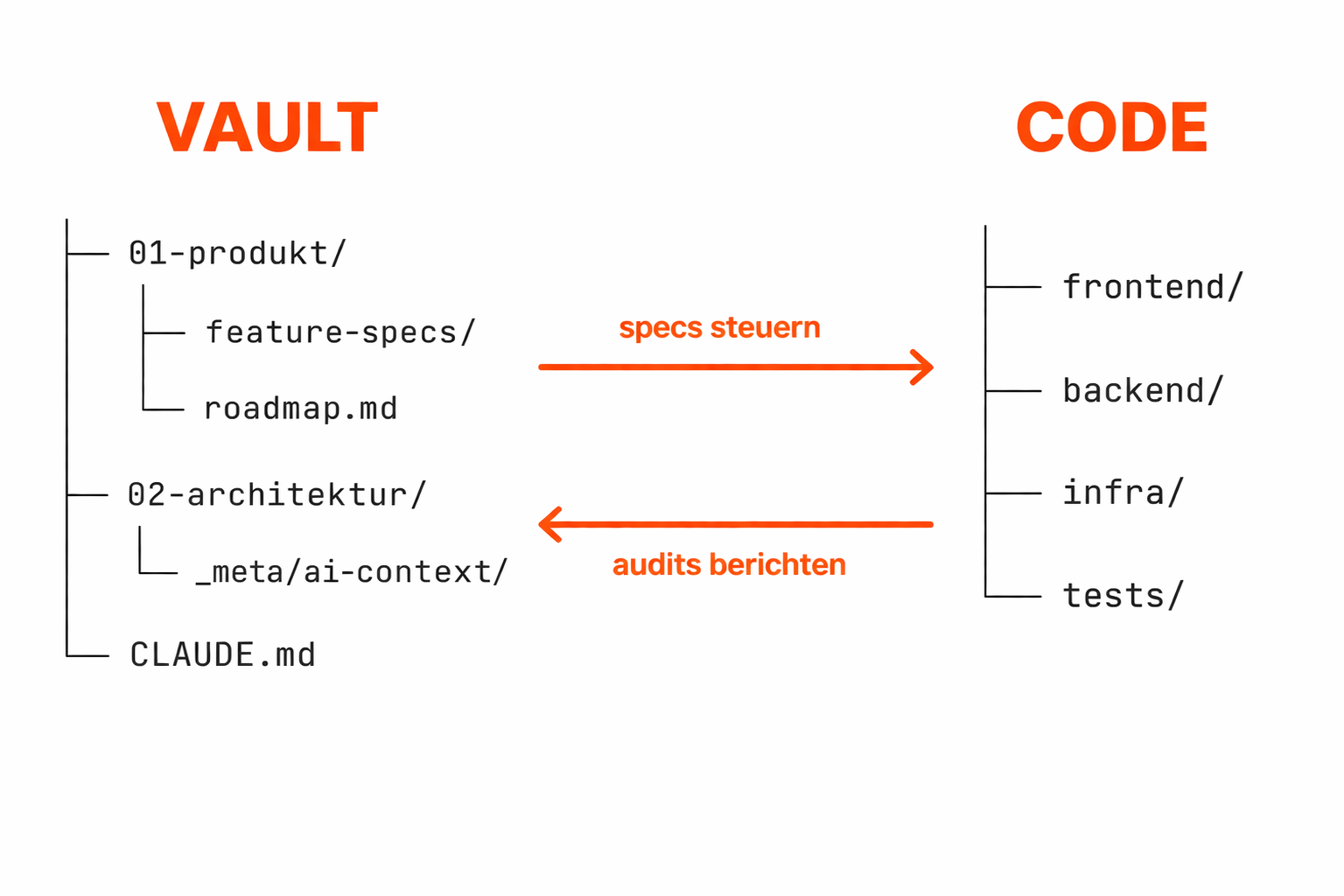

Status Lifecycle With Gates

draft ──→ ready ──→ in-progress ──→ review ──→ done ──→ archive/
└──→ rejected ──→ archive/

Every status transition has prerequisites. Ready means: all open questions are resolved, the acceptance criteria are complete, and the product owner has given the go-ahead. In-progress means: conflicts with other specs are ruled out, the branch exists, and the plan is confirmed. Review means: every acceptance criterion has been individually verified, not just "the code compiles".

No transition without a gate. No gate without documentation.
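The lifecycle can be read as a state machine in which every edge carries its gate. In this minimal sketch, a transition exists only if it appears in the gate table, and every successful transition is logged together with the checks it passed. The check names paraphrase the prerequisites above and the gates of /review-feature and /archive-feature; the gates on the rejection path, and the reading that rejection branches off from draft, are assumptions.

```python
from enum import Enum

class Status(Enum):
    DRAFT = "draft"
    READY = "ready"
    IN_PROGRESS = "in-progress"
    REVIEW = "review"
    DONE = "done"
    REJECTED = "rejected"
    ARCHIVED = "archive"

# Every allowed transition maps to its gate (named prerequisites).
# A transition that is not in this table does not exist.
GATES: dict[tuple[Status, Status], list[str]] = {
    (Status.DRAFT, Status.READY): ["open_questions_resolved", "criteria_complete", "po_go"],
    (Status.DRAFT, Status.REJECTED): ["po_decision"],         # assumption
    (Status.READY, Status.IN_PROGRESS): ["no_conflicts", "branch_exists", "plan_confirmed"],
    (Status.IN_PROGRESS, Status.REVIEW): ["all_criteria_verified"],
    (Status.REVIEW, Status.DONE): ["review_criteria_met"],
    (Status.DONE, Status.ARCHIVED): ["branch_merged"],
    (Status.REJECTED, Status.ARCHIVED): ["po_decision"],      # assumption
}

def transition(spec_id: str, current: Status, target: Status,
               facts: dict[str, bool], log: list[str]) -> Status:
    """Move a spec to `target` only if the edge exists and all its checks hold."""
    gate = GATES.get((current, target))
    if gate is None:
        raise ValueError(f"{spec_id}: no transition {current.value} -> {target.value}")
    missing = [check for check in gate if not facts.get(check, False)]
    if missing:
        raise PermissionError(f"{spec_id}: gate to {target.value} blocked by {missing}")
    # "No gate without documentation": record which checks were satisfied.
    log.append(f"{spec_id}: {current.value} -> {target.value} [{', '.join(gate)}]")
    return target
```

Keying the gates by edge, rather than by target status, means the table itself enforces "no transition without a gate".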

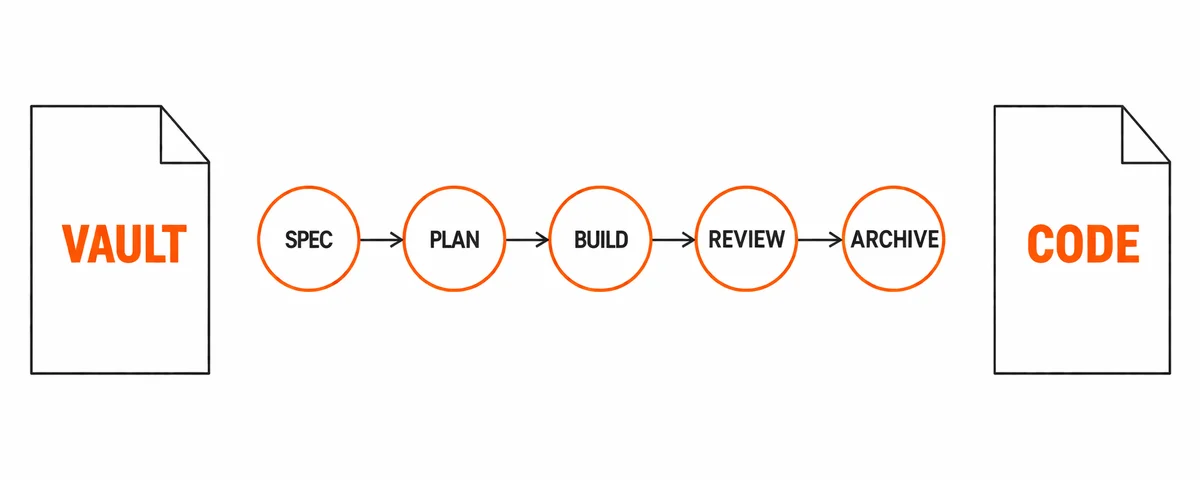

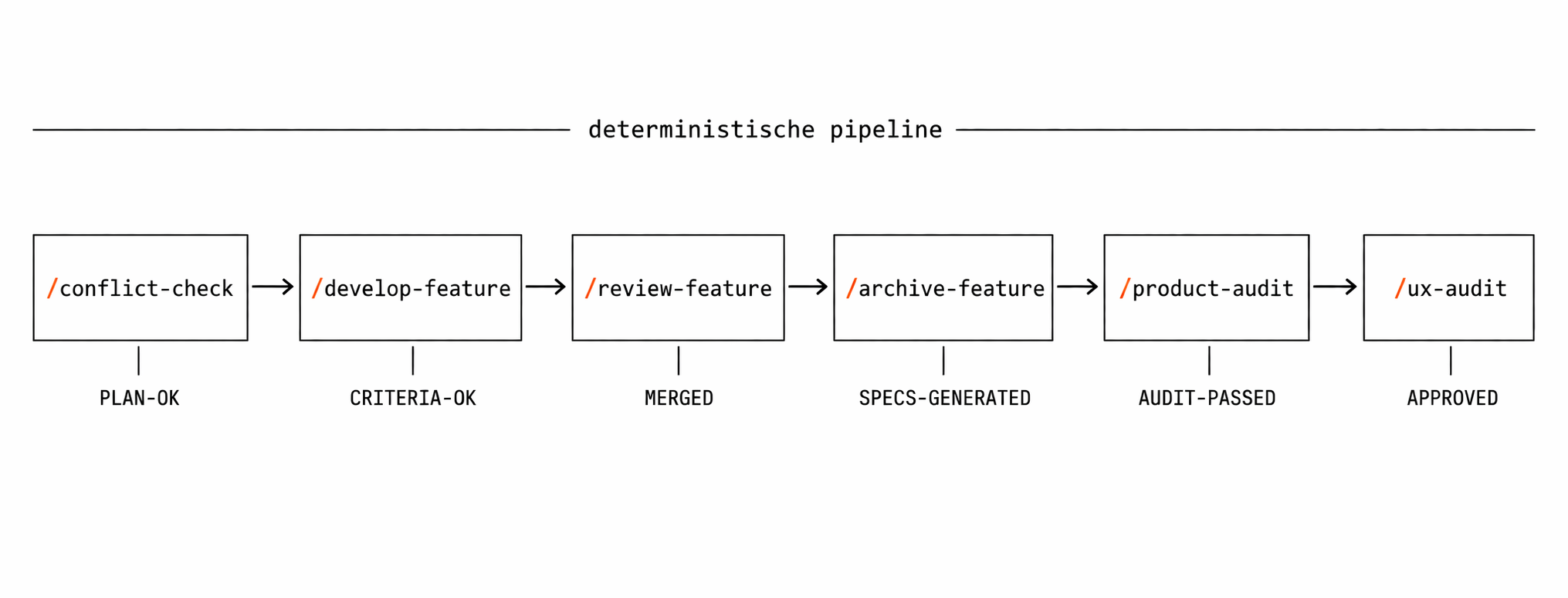

Deterministic Pipelines Instead of Free Conversation

In the vault model, AI sessions are not conversations. They are pipeline executions. Every slash command is a state machine with defined phases and gates. No free prompting, no "just do something".

| Command | Phases | Gate |
|---|---|---|
| /develop-feature | Read spec, check conflicts, create worktree, analyze codebase, create plan, implement, validate | Plan confirmation by PO |
| /review-feature | Check engineering standards, analyze spec drift, visual testing, apply fixes | All acceptance criteria met |
| /product-audit | Visually analyze application, check code against standards, generate specs | Specs with acceptance criteria |
| /ux-audit | Evaluate Nielsen heuristics, journeys per role, build friction map | Improvement specs generated |
| /conflict-check | Load active specs, identify overlaps, compare locked areas | No conflicts or PO decision |
| /archive-feature | Verify branch merge, move spec, update backlog, clean up worktree | Branch merged into main |

/develop-feature as an example: seven phases. Every acceptance criterion individually verified with a note. No "looks fine". No "good enough". The AI reads the spec, creates a plan, waits for confirmation, implements, and then verifies every criterion against the result.

This makes AI development reproducible. Same spec, same process, comparable result. Not "the AI happened to deliver well this time". The process delivers reliably.
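A deterministic pipeline can be sketched as a fixed list of phases plus optional gates that halt execution until a human acts. The seven phase names mirror the /develop-feature row in the table; the `run_pipeline` function, its handler and gate callables, and the resume-by-index mechanism are assumptions for illustration, not the actual slash-command implementation.

```python
from typing import Callable, Optional

State = dict[str, object]

# The seven /develop-feature phases from the table, in fixed order.
DEVELOP_FEATURE = ["read_spec", "check_conflicts", "create_worktree",
                   "analyze_codebase", "create_plan", "implement", "validate"]

def run_pipeline(phases: list[str],
                 handlers: dict[str, Callable[[State], None]],
                 gates: dict[str, Callable[[State], bool]],
                 state: State,
                 start_at: int = 0) -> tuple[list[str], Optional[str]]:
    """Execute phases in order.

    Returns (completed phases, phase it halted after), or (completed, None)
    when every phase ran.
    """
    completed: list[str] = []
    for phase in phases[start_at:]:
        handlers[phase](state)
        completed.append(phase)
        gate = gates.get(phase)
        if gate is not None and not gate(state):
            return completed, phase  # stop: a human must act before resuming
    return completed, None
```

Usage: with a gate on `create_plan` that checks `state["po_confirmed"]`, the first run stops after five phases; once the product owner confirms, calling `run_pipeline(..., start_at=DEVELOP_FEATURE.index("implement"))` finishes the remaining two.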

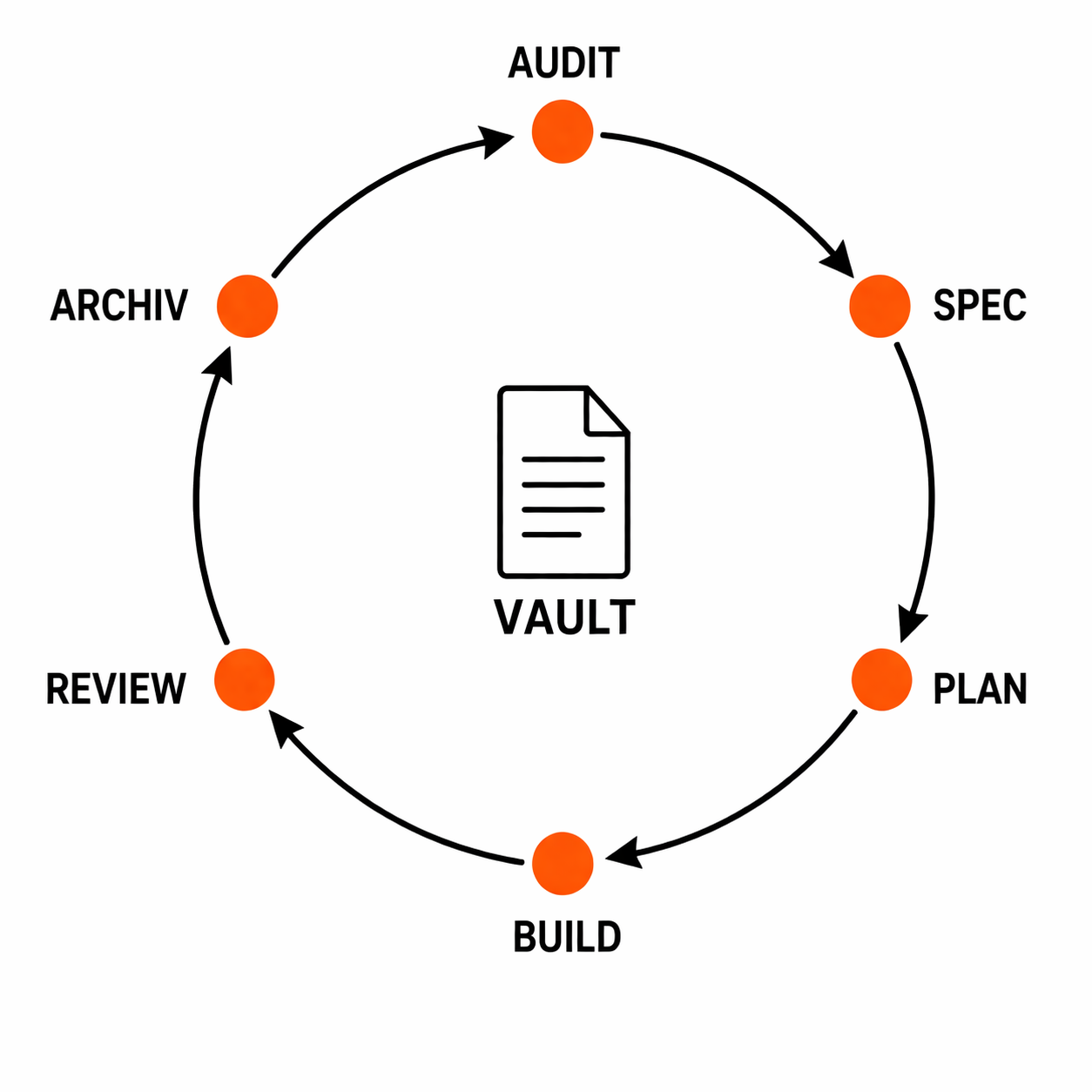

The Closed Loop

The truly new thing is not the speed. It is the closed loop.

/product-audit
→ finds: "Security headers missing"
→ generates: F-058-security-headers-cors-hardening.md
→ status: ready

/develop-feature F-058
→ reads spec, plans, implements
→ validates all acceptance criteria
→ status: review

/review-feature F-058
→ checks against standards and spec
→ status: done

/archive-feature F-058
→ spec moved to archive, worktree cleaned up
→ backlog updated

No hand-off between tools. No ticket system. No "I'll write a Jira ticket and maybe in three sprints it'll get picked up". The audit finds a problem. The system generates a spec. The AI implements the solution. The review checks the result. All in one chain. All git-versioned.
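The last link in that chain, /archive-feature, could look roughly like this minimal sketch: it refuses to archive unless the branch is merged, moves the spec from active/ to archive/, and appends a line to a backlog file. The folder names follow the vault layout described in this article; the `backlog.md` file and both function names are hypothetical. The merge check relies on `git merge-base --is-ancestor`, which exits with status 0 when the first commit is an ancestor of the second.

```python
import subprocess
from pathlib import Path

def branch_is_merged(repo: Path, branch: str, target: str = "main") -> bool:
    """True if `branch` is fully contained in `target` (exit status 0)."""
    result = subprocess.run(
        ["git", "-C", str(repo), "merge-base", "--is-ancestor", branch, target])
    return result.returncode == 0

def archive_spec(vault: Path, spec_file: str, merged: bool,
                 backlog_name: str = "backlog.md") -> Path:
    """Move a finished spec to archive/ and note it in the (hypothetical) backlog."""
    if not merged:
        raise RuntimeError(f"{spec_file}: branch not merged into main, refusing to archive")
    specs = vault / "01-product" / "feature-specs"
    src = specs / "active" / spec_file
    dst = specs / "archive" / spec_file
    dst.parent.mkdir(parents=True, exist_ok=True)
    src.rename(dst)  # the spec becomes a concept reference
    with (vault / backlog_name).open("a", encoding="utf-8") as backlog:
        backlog.write(f"- {spec_file}: done, archived\n")
    return dst
```

Passing `merged` in as a value, typically `branch_is_merged(code_repo, "feature/F-058")`, keeps the vault side testable without a git repository.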

In the loop, features are born from three sources:

Client- and PO-initiated

Classical. The product owner describes an idea. In the vault model, three sentences are enough. The cost of misinterpretation is low because building is cheaper than specifying. The AI implements, the PO evaluates the result instead of a description.

Audit-generated

Product audits, UX audits, and security audits analyze the current state of the application automatically. For every issue found, the system generates a complete spec with acceptance criteria. No human writes these specs. The pipeline produces them.
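A hypothetical sketch of that step: one finding becomes one spec file with all four parts and status ready, named in the same pattern as F-058-security-headers-cors-hardening.md. The `Finding` fields, the `spec_from_finding` function, and the markdown layout are assumptions; the real audit pipelines are not shown in this article.

```python
import re
from dataclasses import dataclass

@dataclass
class Finding:
    audit: str               # which pipeline found it, e.g. "product-audit"
    title: str               # short problem title
    requirement: str         # what must change, phrased as what and why
    criteria: list[str]      # measurable conditions for "done"
    out_of_scope: list[str]  # explicit scope boundary

def slugify(title: str) -> str:
    """Lowercase and collapse every non-alphanumeric run into one hyphen."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")

def spec_from_finding(next_id: int, finding: Finding) -> tuple[str, str]:
    """Turn one audit finding into (filename, markdown text) for a ready spec."""
    spec_id = f"F-{next_id:03d}"
    lines = [
        f"# {spec_id} {finding.title}",
        "",
        "status: ready",
        f"source: {finding.audit}",
        "",
        "## Requirements",
        finding.requirement,
        "",
        "## Acceptance criteria",
        *[f"- [ ] {c}" for c in finding.criteria],
        "",
        "## Out of scope",
        *[f"- {item}" for item in finding.out_of_scope],
        "",
        "## Open questions",
        "- none",
    ]
    return f"{spec_id}-{slugify(finding.title)}.md", "\n".join(lines) + "\n"
```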

Engineer-initiated

While working on feature A, the AI notices an adjacent problem. A spec is created. The product owner decides whether to implement or discard. The loop stays the same.

In the actual project, twelve out of 25 features were generated by audits. Not requested, but derived from the application's state. The product owner made all 25 decisions. But he would never have specified those twelve audit-generated features manually. Not because he did not want them. Because no human can systematically check every heuristic, every security vector, and every engineering convention.

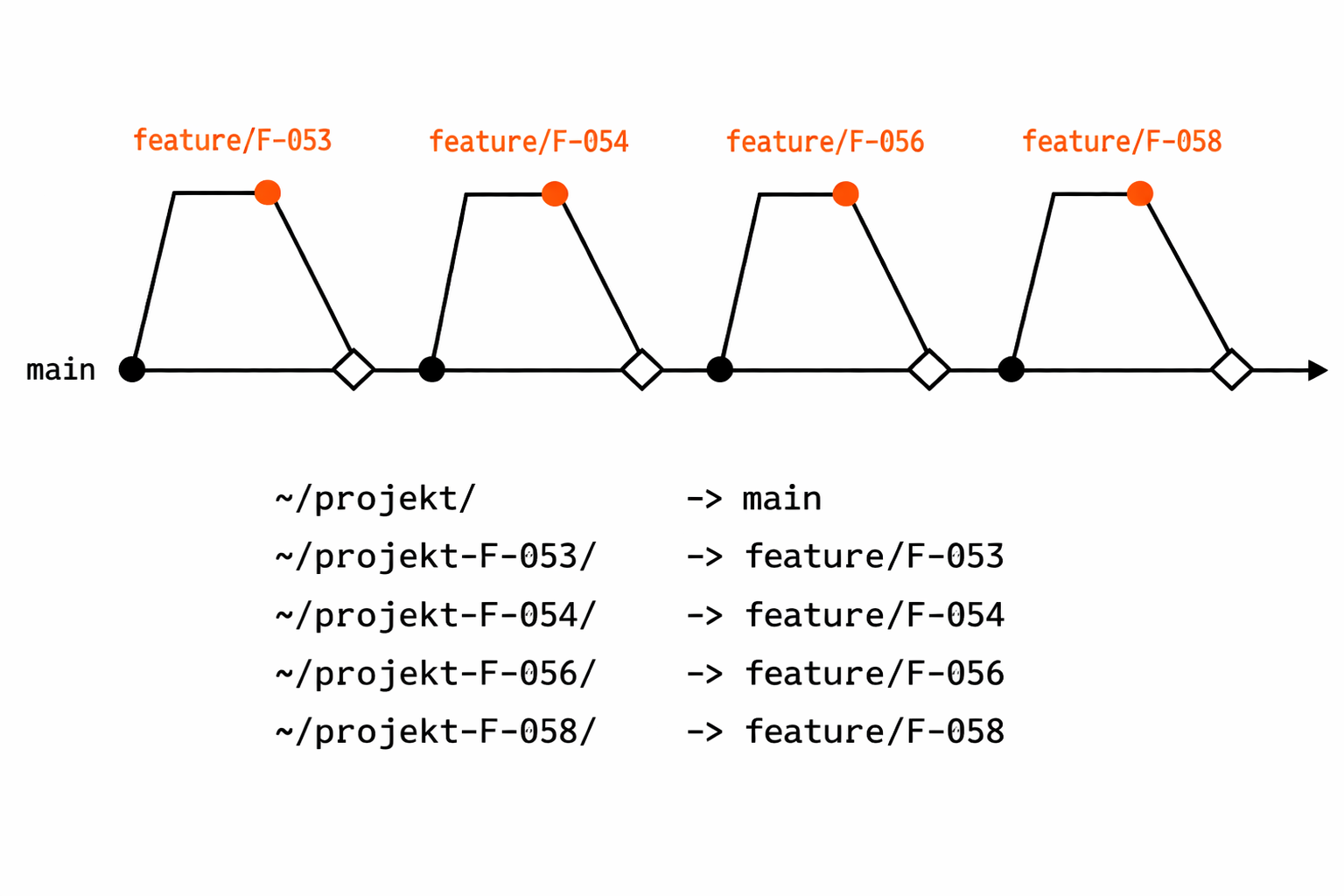

Parallelism: Four Sessions, Zero Merge Conflicts

A standard git checkout has a single working directory, so only one branch, and therefore one feature, can be checked out at a time. That limits you to serial execution. Git worktrees break that limitation. Every feature gets its own directory with its own branch. Same git database, isolated working environments.

~/project/ → main (audits, reviews)
~/project-F-053/ → feature/F-053 (Custom Background)
~/project-F-054/ → feature/F-054 (Navigation Redesign)
~/project-F-056/ → feature/F-056 (File Upload Security)
~/project-F-058/ → feature/F-058 (CORS Headers)
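Creating and removing such a worktree maps onto two standard git commands, `git worktree add -b <branch> <path> <base>` and `git worktree remove <path>`. This Python sketch only assembles them, and runs the first one when `dry_run` is off; the sibling-directory naming convention is inferred from the listing above, and the function names are hypothetical.

```python
import subprocess
from pathlib import Path

def worktree_commands(repo: Path, spec_id: str,
                      base: str = "main") -> tuple[list[str], list[str]]:
    """Build the git commands that create and later remove a feature worktree.

    Assumed layout: ~/project -> ~/project-<spec_id> on branch feature/<spec_id>.
    """
    path = repo.parent / f"{repo.name}-{spec_id}"
    branch = f"feature/{spec_id}"
    # git worktree add -b <new-branch> <path> <commit-ish>
    add = ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(path), base]
    # git worktree remove <path>, used once the branch is merged and archived
    remove = ["git", "-C", str(repo), "worktree", "remove", str(path)]
    return add, remove

def create_worktree(repo: Path, spec_id: str, base: str = "main",
                    dry_run: bool = True) -> list[str]:
    """Return the add command; execute it only when dry_run is False."""
    add, _ = worktree_commands(repo, spec_id, base)
    if not dry_run:
        subprocess.run(add, check=True)  # raises CalledProcessError if git fails
    return add
```

Because all worktrees share one git database, a branch created in one directory is immediately visible to the main checkout for review and merging.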

The vault orchestrates the parallelism. Every spec declares its locked areas: which files and modules the feature touches. Before a new feature starts, the conflict check inspects all active specs and their locked areas. Overlap? The feature waits, or the product owner decides the order.

| Safe in parallel | Not parallel |
|---|---|
| Backend feature + frontend feature | Two features on the same component |
| New endpoint + infra change | Two database migrations |
| Independent UI pages | Overlapping shared services |
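A minimal sketch of the conflict check, assuming locked areas are declared as repository paths: two areas collide when one path equals or contains the other. Under that assumption, two database migrations conflict automatically because both lock the migrations folder. The path-prefix rule and the function names are illustrative; the article does not specify how locked areas are written down or compared.

```python
from pathlib import PurePosixPath

def areas_overlap(a: str, b: str) -> bool:
    """Two locked areas overlap if one path is the other or lies inside it."""
    pa, pb = PurePosixPath(a).parts, PurePosixPath(b).parts
    n = min(len(pa), len(pb))
    return pa[:n] == pb[:n]  # compares whole path components, not characters

def conflict_check(active: dict[str, list[str]],
                   candidate: list[str]) -> list[tuple[str, str, str]]:
    """Return (active spec, candidate area, locked area) for every overlap."""
    conflicts = []
    for spec_id, locked in sorted(active.items()):
        for area in candidate:
            for other in locked:
                if areas_overlap(area, other):
                    conflicts.append((spec_id, area, other))
    return conflicts
```

An empty result means the new feature may start; any hit goes to the product owner to decide the order.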

Peak day in the project: 17 features in 53 commits. Four concurrent AI sessions. Zero merge conflicts. Not by luck, but through locked areas in the vault that prevent conflicts before they arise.

Stateless, but Not Memoryless

Every AI session is stateless. The vault keeps it from being memoryless: the AI context layer gives every new session the same rules, the same patterns, the same accumulated knowledge.

CLAUDE.md → rules, prohibitions, conventions

_meta/ai-context/
├── feature-workflow.md → slash commands, phases, gates
├── backend-reference.md → API patterns, three-file pattern
├── frontend-reference.md → component conventions, state
├── infra-reference.md → AWS CDK, deployment patterns
└── vault-context.md → cross-references vault ↔ code repo

01-product/feature-specs/active/ → open assignments
01-product/feature-specs/archive/ → concept reference for completed features

CLAUDE.md is the first file every session reads. It contains prohibitions ("no code in this vault"), workflow rules ("before feature finalization: conflict check"), and conventions ("specs describe only what and why"). The reference files provide orientation: where do I find what? Which patterns apply? How do vault and code relate?
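As an illustration of how a fresh session could receive this layer, the sketch below concatenates CLAUDE.md first, then whichever reference files exist, then the list of open assignments. The order of the reference files and the `build_session_context` function are assumptions; in practice the coding assistant may read CLAUDE.md on its own.

```python
from pathlib import Path

# Reference files from the layout above; their order here is an assumption.
REFERENCE_FILES = ["feature-workflow.md", "backend-reference.md",
                   "frontend-reference.md", "infra-reference.md", "vault-context.md"]

def build_session_context(vault: Path) -> str:
    """Concatenate the vault's AI context layer into one preamble for a new session."""
    # Rules always come first.
    sections = [f"## CLAUDE.md\n{(vault / 'CLAUDE.md').read_text(encoding='utf-8')}"]
    for name in REFERENCE_FILES:
        ref = vault / "_meta" / "ai-context" / name
        if ref.exists():
            sections.append(
                f"## {ref.relative_to(vault).as_posix()}\n{ref.read_text(encoding='utf-8')}")
    active_dir = vault / "01-product" / "feature-specs" / "active"
    active = sorted(p.name for p in active_dir.glob("*.md"))
    sections.append("## Open assignments\n" + "\n".join(active))
    return "\n\n".join(sections)
```

Because the preamble is rebuilt from versioned files each time, session 1 and session 50 start from the same rules unless someone commits a change to them.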

Session 1 and session 50 produce code with the same patterns. Not because the same person was involved. Not because the chat transcript was preserved. Because the vault encoded the decisions.

The Economic Inversion

When a feature takes 30 minutes to four hours instead of one to two weeks, the economics of specification invert.

| Model | Feature duration | Cost when discarded |
|---|---|---|
| Classical (1 developer) | 1 to 2 weeks | EUR 7,500 to 15,000 |
| Classical (team) | 1 to 2 sprints | EUR 30,000 to 60,000 |
| Vault-driven | 30 min to 4 hours | EUR 100 to 500 |

A specification workshop costs EUR 2,000 to 4,000. In the same time, the vault pipeline builds the feature. The client does not see a description. The client sees the result.

The implication is uncomfortable: building and discarding is cheaper than upfront specification. A discarded feature costs EUR 200. A specification workshop held to avoid building one wrong feature costs EUR 3,000.
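The break-even arithmetic uses only the figures in this section. With W the cost of one specification workshop and D the cost of one discarded vault-driven feature, W/D is the number of features that could be built and thrown away for the price of one workshop:

```latex
\frac{W}{D} = \frac{\text{EUR } 3{,}000}{\text{EUR } 200} = 15,
\qquad
\frac{W_{\min}}{D_{\max}} = \frac{2{,}000}{500} = 4,
\qquad
\frac{W_{\max}}{D_{\min}} = \frac{4{,}000}{100} = 40
```

Even at the least favorable ends of both ranges, one workshop pays for four discarded features.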

No loss of quality. The specs are sharper, not larger. Every criterion is testable. Every scope boundary is explicit. Less prose, more substance.

Quality Assurance: Built In, Not Bolted On

In the classical model, quality assurance is a separate step at the end. In the vault model, it is part of every phase.

| Phase | Quality gate |
|---|---|
| Spec creation | Codebase analysis, edge case checks, sharpening of acceptance criteria |
| Before implementation | Conflict check against all active specs (mandatory, not optional) |
| Implementation | Plan confirmation by product owner before code is written |
| After implementation | Every acceptance criterion individually verified with a note |
| Review | Engineering standards, spec drift analysis, visual test |
| Archiving | Branch must be merged. Spec becomes concept reference, not code reference |

In the actual project there were 23 automated review rounds with self-corrections. The AI corrected itself against the criteria in the spec. No manual bug fixing, no separate QA team. Anyone who cannot accept this slows the pipeline down to classical speed.
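One way to picture a review round with self-correction: verify every criterion with an explicit note, apply fixes for the failures, and re-verify, up to a fixed round limit. This is a hypothetical sketch; `check` and `fix` stand in for whatever the review pipeline actually does, and the limit of three rounds is an assumption.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class CriterionResult:
    criterion: str
    passed: bool
    note: str  # every criterion gets an explicit note, never just "looks fine"

def review_loop(criteria: list[str],
                check: Callable[[str], tuple[bool, str]],
                fix: Callable[[str], None],
                max_rounds: int = 3) -> tuple[int, bool, list[CriterionResult]]:
    """Verify each criterion; fix failures and re-verify until all pass or rounds run out.

    Returns (rounds used, all passed, results of the last round).
    """
    results: list[CriterionResult] = []
    for round_no in range(1, max_rounds + 1):
        results = [CriterionResult(c, *check(c)) for c in criteria]
        failed = [r for r in results if not r.passed]
        if not failed:
            return round_no, True, results
        if round_no == max_rounds:
            break  # no point fixing what will not be re-verified
        for r in failed:
            fix(r.criterion)  # self-correction against the spec's own criterion
    return max_rounds, False, results  # still failing: hand back to a human
```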

Numbers From Practice

Full-stack web app. Angular 21, .NET 10, PostgreSQL, AWS. One engineer, five days.

| Metric | Value |
|---|---|
| Completed features | 25 |
| Of which audit-generated | 12 (48 percent) |
| Code commits | 90+ |
| Lines of code written | 26,000+ |
| Files changed | 275+ |
| Maximum parallelism | 4 concurrent AI sessions |
| Peak day (day 4) | 17 features, 53 commits |
| Automated review rounds | 23 |
| Merge conflicts | 0 |
| Discarded features | 1 (deliberately, over-engineering identified) |

No feature type was left out: UX (custom background, navigation redesign, progressive disclosure), security (PII encryption, CORS, token management, frontend hardening), infrastructure (production monitoring, database lifecycle), functionality (timezone-aware date picker, team calendar, file upload security).

Exponential Acceleration

Day 1: one feature. Day 2: zero features, only vault infrastructure. Day 3: seven features. Day 4: 17 features.

The pattern is not linear. The first two days invest in the vault: writing AI context, building slash commands, defining workflow rules. From day 3 onward the compounding effect kicks in. The audit generates specs, /develop-feature implements them, /review-feature validates them, and /archive-feature archives them. A closed pipeline.

Compounding is not a bonus. It is the architecture.

The investment in the vault does not pay off additively but multiplicatively. Every tool accelerates all subsequent features. Every convention prevents all subsequent mistakes. On day 4 the AI reads a vault that contains three days of accumulated knowledge. The specs are sharper. The patterns more stable. The conflict check more reliable.

Where It Does Not Work

The vault approach has prerequisites. Anyone who fails to meet them will be disappointed.

- Experienced engineers. The vault does not replace expertise, it amplifies it. Whoever does not understand architecture, domain, and customer needs feeds the vault with the wrong decisions. The AI then amplifies the wrong patterns.

- Stable codebase with patterns. The AI derives the technical implementation from existing patterns. Without consistent patterns it improvises differently for every feature. A codebase without tests or conventions gives the AI nothing to derive from.

- Specs that describe what, not how. Whoever prescribes component names constrains the AI instead of guiding it. This requires trust: the AI knows the codebase. The human knows the customer problem.

- Discipline at the gates. Every status transition has prerequisites. Every conflict check is mandatory. No "good enough". The speed comes from the process, not from cutting corners on it.

- A decisive product owner. 17 features in a day means 17 decisions. Accept, reject, or modify. Whoever needs three meetings to release a feature slows the pipeline.

- Investment in the first days. The vault, the slash commands, and the AI context have to be built. Days 1 and 2 produce no feature output. Anyone who wants to see features on day one will fail.

Scaling in Two Dimensions

Vertical: more features per engineer. Parallel worktrees, audit-generated specs, and automated reviews multiply the output of a single engineer. 25 features in five days instead of three to five in a sprint.

Horizontal: more engineers per vault. The same vault steers multiple engineers. Each works on their own worktrees, each follows the same slash commands, each checks against the same specs. The conflict check prevents parallel streams from colliding.

The same model works with seven engineers on an enterprise platform with 100,000+ lines of code just as it does with one engineer on a customer web app. The vault structure does not change. The slash commands do not change. The speed per engineer stays the same.

More Documentation, Less Writing

The opening paradox dissolves: the vault is not documentation. Documentation describes what happened. The vault determines what happens next. It is not a logbook. It is a steering system.

AI development without a steering system is fast chaos. A vault turns that chaos into a reproducible process. Specs define the what. Slash commands define the process. Audits find automatically what needs to be improved. Gates secure the quality. Worktrees enable parallelism. Everything versioned, everything traceable.

No ticket system. No standup. No sprint planning. Instead: a vault, a code repo, a product owner who decides, and an AI that delivers.

The question is not whether your AI can program. The question is whether tomorrow it still knows what it learned yesterday. Whoever optimizes prompt engineering optimizes the session. Whoever builds a vault optimizes every session that will ever follow. And that is why one engineer with AI can deliver the output of a team without sacrificing the quality of a team.
